Multi-browser and multi-environment testing in Serenity BDD

Sometimes we might want to run the same test in different environments or on different browsers, and still see each test run appear in the reports. Recent versions of Serenity BDD let you do multi-browser and multi-environment testing using the notion of contexts. A context is a way of running the same test several times and showing all of the results in the same report.

Simple test contexts

For example, you could run your tests using the Chrome WebDriver, providing a context called "chrome":

$ mvn verify -Dcontext=chrome -Dwebdriver.driver=chrome

You might then run the tests again (or in parallel, on a different machine) using Firefox:

$ mvn verify -Dcontext=firefox -Dwebdriver.driver=firefox

You might also run the tests a third time using PhantomJS:

$ mvn verify -Dcontext=phantomjs -Dwebdriver.driver=phantomjs

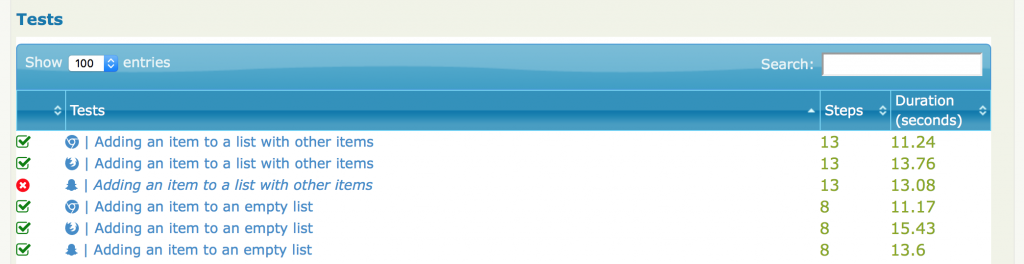

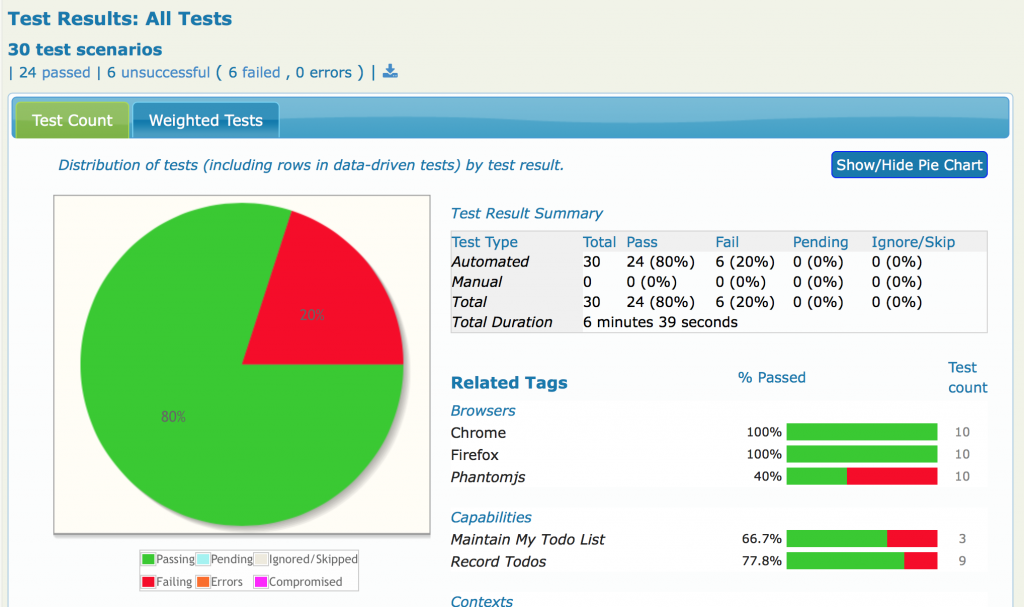

Serenity will now include test runs for all three browsers in the same report:

Adding more readable tags

Serenity will add a "context" tag to each of your tests, but you might want to make your reports even clearer by adding a more meaningful tag. You can do this using the "injected.tags" system property:

$ mvn verify -Dcontext=chrome -Dwebdriver.driver=chrome -Dinjected.tags="browser:chrome"

This way, tags for both the browsers and the test contexts will appear in the reports.

(If you prefer, you can disable the context tag entirely by setting the serenity.add.context.tag property to false.)
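Running each context by hand quickly gets repetitive. The script below is a minimal sketch, not part of Serenity itself: it assumes Maven is on the PATH, and the DRY_RUN and HIDE_CONTEXT_TAG variables and the run_context function are hypothetical local conventions. It builds one "mvn verify" command per browser using the "context", "webdriver.driver" and "injected.tags" properties described above, adding "serenity.add.context.tag=false" when HIDE_CONTEXT_TAG is 1. By default it only prints the commands; set DRY_RUN=0 to actually run them.

```shell
#!/usr/bin/env bash
# Hypothetical helper script: one Serenity run per browser, each with its own
# context and a readable "browser:<name>" injected tag.
set -euo pipefail

DRY_RUN="${DRY_RUN:-1}"                   # 1 = print commands only, 0 = run them
HIDE_CONTEXT_TAG="${HIDE_CONTEXT_TAG:-0}" # 1 = also pass -Dserenity.add.context.tag=false

# Print (or run) the Maven command for a single browser context.
run_context() {
  local browser="$1"
  local -a cmd
  cmd=(mvn verify
    "-Dcontext=${browser}"
    "-Dwebdriver.driver=${browser}"
    "-Dinjected.tags=browser:${browser}")
  if [[ "$HIDE_CONTEXT_TAG" == "1" ]]; then
    cmd+=("-Dserenity.add.context.tag=false")
  fi
  if [[ "$DRY_RUN" == "1" ]]; then
    echo "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}

for browser in chrome firefox phantomjs; do
  run_context "$browser"
done
```

Because these commands run one after another on the same machine, this does not replace the parallel, multi-machine setup mentioned earlier; on a CI server you might instead run each context as a separate job.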

Cross-browser and cross-OS tests

There are a couple of common use-cases for contexts: running the same test in different browsers, and running the same test on different operating systems. If you use the name of a browser (e.g. "chrome", "firefox", "safari", "ie"), the context will be represented in the reports by the icon of that browser. If you use the name of an operating system (e.g. "linux", "windows", "mac", "android", "iphone"), a similar icon will be used. If you use any other term for your context, it will appear in text form in the test results lists, so it is best to keep context names short.
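For cross-OS runs, one way to keep context names short and consistent is to derive them from the machine the tests run on. The sketch below is an assumption, not a Serenity feature: the hypothetical os_context_for function maps the output of "uname -s" to one of the operating-system names mentioned above, and falls back to the lower-cased kernel name for anything else (which, as described above, would appear in text form). Mobile contexts such as "android" or "iphone" cannot be detected this way and would need to be set explicitly.

```shell
#!/usr/bin/env bash
# Hypothetical helper: turn a kernel name (as printed by "uname -s") into a
# short context name for cross-OS test runs.
os_context_for() {
  local kernel="$1"
  case "$kernel" in
    Linux)                echo "linux" ;;
    Darwin)               echo "mac" ;;
    MINGW*|MSYS*|CYGWIN*) echo "windows" ;;
    *)                    echo "$kernel" | tr '[:upper:]' '[:lower:]' ;;
  esac
}

# Example: print the command a build agent on this machine would run.
context="$(os_context_for "$(uname -s)")"
echo "mvn verify -Dcontext=${context}"
```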

Aggregating test results

You may also choose to run a large test suite on several machines in parallel, and then merge the reports together. You can do this simply by:

- Providing a different context for each test run;

- Copying the contents of the target/site/serenity directory from each run into the target/site/serenity directory of your main project; and

- Generating the aggregate reports from this main project (using "mvn serenity:aggregate" or "gradle aggregate").
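The copy step above can be scripted. The following is a minimal sketch under some assumptions: each parallel run's project directory is available locally (for example, downloaded as a CI artifact), each run used a different context, and the hypothetical merge_reports function is given the main project directory followed by the run directories. It copies the contents of each run's target/site/serenity directory into the main project's target/site/serenity directory, warning about runs that have no results; you would then generate the aggregate report from the main project as usual.

```shell
#!/usr/bin/env bash
# Hypothetical helper: merge raw Serenity results from several runs into the
# main project before generating the aggregate report.
set -euo pipefail

# Usage: merge_reports <main-project-dir> <run-dir>...
merge_reports() {
  local main_dir="$1"
  shift
  local dest="${main_dir}/target/site/serenity"
  mkdir -p "$dest"
  local run
  for run in "$@"; do
    local src="${run}/target/site/serenity"
    if [[ -d "$src" ]]; then
      # The trailing "/." copies the directory's contents, including hidden files.
      cp -R "${src}/." "$dest/"
    else
      echo "warning: no Serenity results in ${run}" >&2
    fi
  done
}

# Example (directory names are placeholders):
#   merge_reports . ../run-chrome ../run-firefox ../run-phantomjs
#   mvn serenity:aggregate
```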

These features work with version 1.4.0 of serenity-core.
